48.8566° N, 2.3522° E

// COMPLEXITY

MACHINE LEARNING

Building efficient transformer architectures with Mu-Guided Dynamics and Token-Routed MLP

// PROJECTS

What We're Building

A 1.5B-parameter language model trained with the Complexity-Deep architecture, combining Mu-guided attention and token-routed experts.

Our latest submission on OpenReview. Token-Routed MLP with Mu-Guided Dynamics for efficient transformer architectures.

// INFERENCE

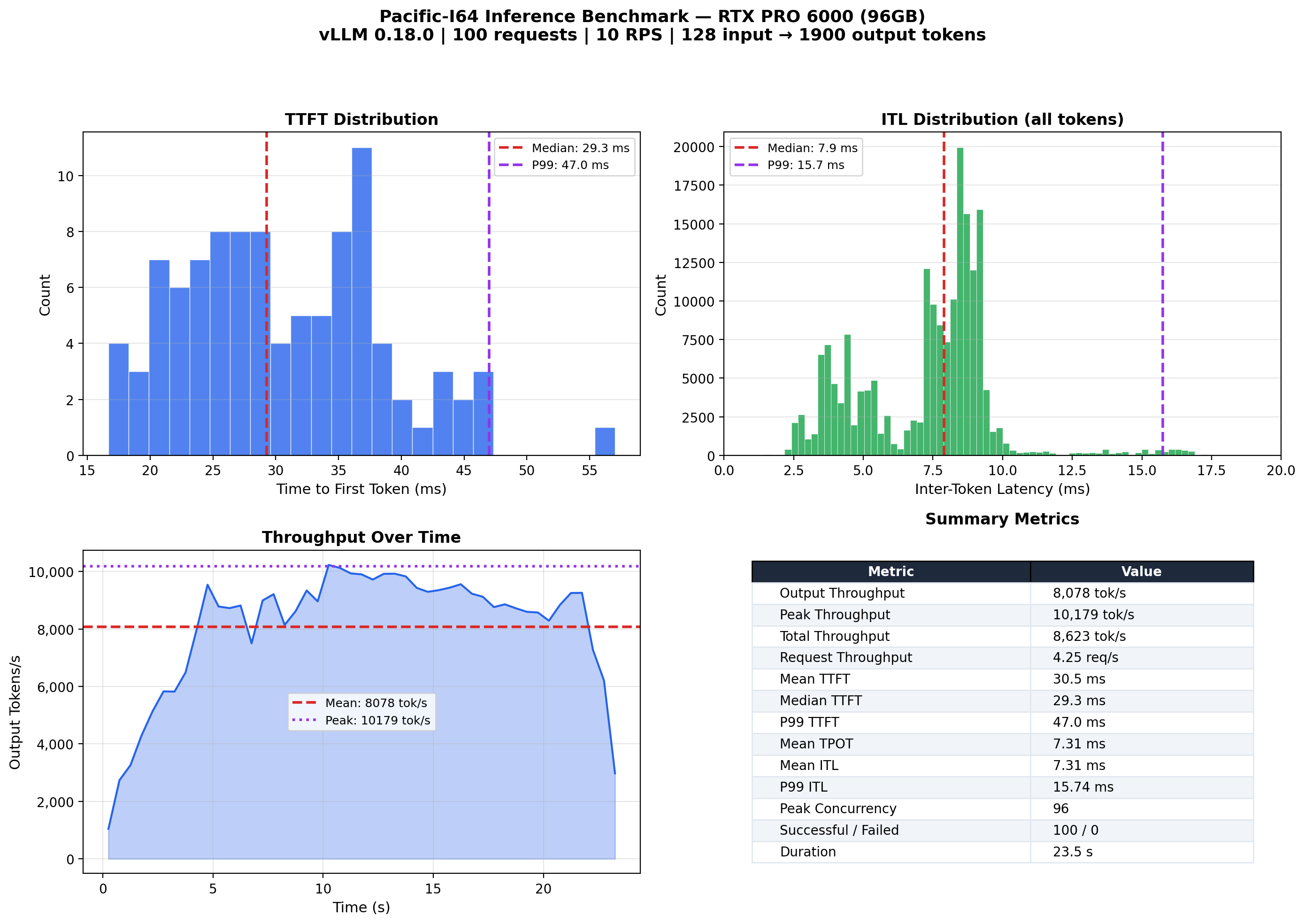

vLLM Benchmark

A 187M-parameter Token-Routed model served via vLLM 0.18 with PagedAttention and CUDA graphs. Because token routing is deterministic, it is natively compatible with CUDA graph capture and avoids the CPU-GPU synchronizations that learned-router MoE architectures require.

// EXPERT ANALYSIS

Expert t-SNE 3D

Interactive 3D t-SNE of mean expert activations per layer (MLP output). Rotate, zoom and hover to explore expert specialization across layers.

// PUBLICATIONS

Research

COMPLEXITY-DEEP: A Language Model Architecture with Mu-Guided Attention and Token-Routed MLP

Anonymous

Submitted to Transactions on Machine Learning Research • 2026

We present COMPLEXITY-DEEP, a language model architecture introducing Token-Routed MLP with Zipf-balanced bin-packing routing, Mu-Guided Attention for inter-layer communication, and a Shared Lexical Expert. Under review at TMLR.
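To give an intuition for what frequency-balanced bin-packing routing can look like, here is a minimal sketch in Python. It is not the paper's algorithm: it assumes a plain greedy heuristic (heaviest token first, placed into the currently lightest expert) and a synthetic Zipf-like frequency table. The function name `zipf_balanced_assignment` and all parameters are hypothetical.

```python
import heapq


def zipf_balanced_assignment(token_freqs, num_experts):
    """Greedy bin packing (an assumed heuristic, not the published method).

    Tokens are visited from most to least frequent, and each one is
    assigned to the expert whose accumulated frequency is currently lowest.
    Returns (token_id -> expert mapping, per-expert load list).
    """
    # Min-heap of (accumulated_load, expert_id).
    heap = [(0.0, e) for e in range(num_experts)]
    heapq.heapify(heap)

    assignment = {}
    by_frequency = sorted(token_freqs.items(), key=lambda kv: (-kv[1], kv[0]))
    for token_id, freq in by_frequency:
        load, expert = heapq.heappop(heap)
        assignment[token_id] = expert
        heapq.heappush(heap, (load + freq, expert))

    # Recompute final loads from the mapping for reporting.
    loads = [0.0] * num_experts
    for token_id, expert in assignment.items():
        loads[expert] += token_freqs[token_id]
    return assignment, loads


# Hypothetical Zipf-like vocabulary: the token of rank r has weight 1 / r.
vocab_size = 1000
token_freqs = {t: 1.0 / (t + 1) for t in range(vocab_size)}
assignment, loads = zipf_balanced_assignment(token_freqs, num_experts=4)
spread = max(loads) - min(loads)
```

The mapping is computed once, offline, from corpus statistics; at run time routing reduces to a table lookup, so `spread` measures how evenly expected traffic is split between experts.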

Cite Our Work

@article{anonymous2026complexitydeep,
  title={{COMPLEXITY}-{DEEP}: A Language Model Architecture with Mu-Guided Attention and Token-Routed {MLP}},
  author={Anonymous},
  journal={Submitted to Transactions on Machine Learning Research},
  year={2026},
  url={https://openreview.net/forum?id=jZq6EVboC6},
  note={Under review}
}

// ABOUT

Our Mission

Complexity-ML is dedicated to developing efficient and innovative transformer architectures. Our research focuses on making large language models more accessible through novel routing mechanisms and dynamics-inspired control systems.

Mu-Guided Dynamics

Learned mu projection that maintains context across layers through clamped scaling and linear adaptation.

Token-Routed MLP

Deterministic expert routing based on token identity. Perfect load balance without routing collapse.
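As an illustration of routing by token identity, the sketch below picks each token's expert with a fixed lookup table instead of a learned gate. The table here is a hypothetical stand-in (a simple modulo over the token id); the real mapping used by the model is not described on this page, and `route_tokens`, `NUM_EXPERTS`, and `VOCAB_SIZE` are illustrative names.

```python
def route_tokens(token_ids, token_to_expert):
    """Return the expert index for each token using a pure table lookup.

    No scores are computed and no top-k selection happens, so the result
    depends only on the token ids, never on activations.
    """
    return [token_to_expert[t] for t in token_ids]


# Hypothetical routing table: modulo assignment stands in for the real one.
NUM_EXPERTS = 4
VOCAB_SIZE = 16
token_to_expert = [t % NUM_EXPERTS for t in range(VOCAB_SIZE)]

batch = [3, 7, 3, 0, 12, 5]
experts = route_tokens(batch, token_to_expert)
```

Because the same token id always maps to the same expert, there is no router to collapse, and the per-expert load is fixed by how the table is built rather than by anything learned during training.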

CGGR Kernels

Custom Triton kernels for contiguous group GEMM routing. 5-6x speedup over naive implementations.
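The idea behind contiguous grouping can be sketched without Triton. The pure-Python example below is an assumption about the general technique, not the CGGR kernels themselves: tokens are reordered so that each expert's inputs form one contiguous segment, each expert then processes its segment in a single pass, and results are written back in the original order. All function names are hypothetical.

```python
def group_by_expert(expert_ids, num_experts):
    """Stable counting sort over expert ids.

    Returns `order` (token indices grouped by expert) and `offsets`, where
    expert e owns positions offsets[e] .. offsets[e + 1] - 1 of `order`.
    """
    counts = [0] * num_experts
    for e in expert_ids:
        counts[e] += 1
    offsets = [0] * (num_experts + 1)
    for e in range(num_experts):
        offsets[e + 1] = offsets[e] + counts[e]
    cursor = offsets[:-1].copy()
    order = [0] * len(expert_ids)
    for i, e in enumerate(expert_ids):
        order[cursor[e]] = i
        cursor[e] += 1
    return order, offsets


def matvec(weight, x):
    """Multiply a row-major matrix by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in weight]


def grouped_forward(xs, expert_ids, weights):
    """Apply each expert's weight matrix to its contiguous token segment."""
    order, offsets = group_by_expert(expert_ids, len(weights))
    out = [None] * len(xs)
    for e, weight in enumerate(weights):
        # One pass per expert over a contiguous slice of `order`.
        for pos in range(offsets[e], offsets[e + 1]):
            i = order[pos]
            out[i] = matvec(weight, xs[i])  # scatter back to original slot
    return out


xs = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
expert_ids = [1, 0, 1]
weights = [
    [[1.0, 0.0], [0.0, 1.0]],  # expert 0: identity
    [[2.0, 0.0], [0.0, 2.0]],  # expert 1: scale by 2
]
order, offsets = group_by_expert(expert_ids, len(weights))
outputs = grouped_forward(xs, expert_ids, weights)
```

On a GPU, the inner loop over a segment would correspond to one dense GEMM per expert, which is where a grouped kernel can gain over dispatching tokens one at a time.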